By K Raveendran

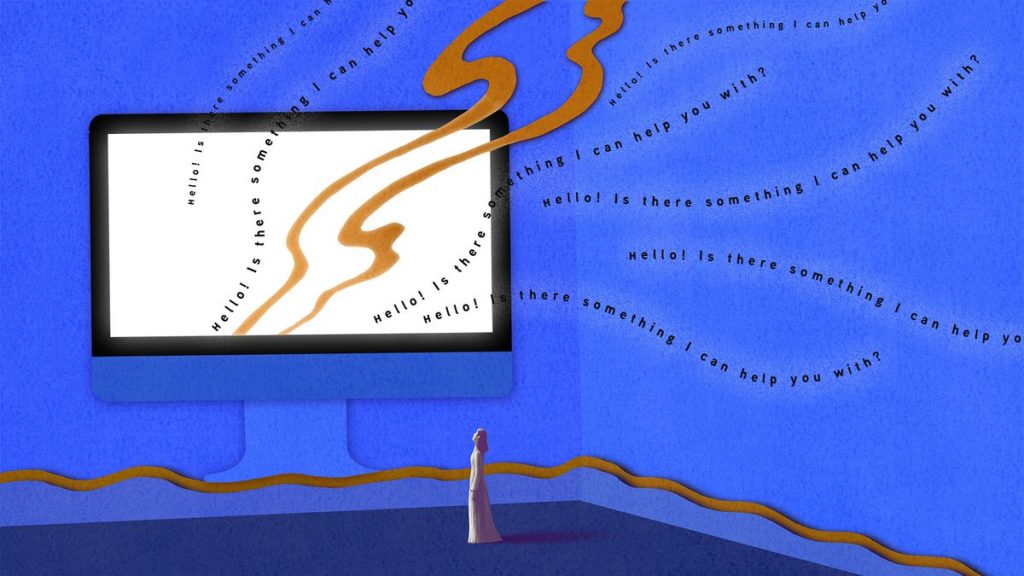

The call by Sam Altman, chief executive of ChatGPT maker OpenAI, at a US Senate committee hearing for governments across the world to regulate artificial intelligence heavily, so as to mitigate the risks from the new technology, takes the current debate about generative AI to a new dimension.

While the potential of the new technology to solve some of humanity’s biggest problems is widely appreciated, there has been growing concern about its potential to cause harm. “My worst fear is that we, the technology industry, cause significant harm to the world,” Altman said. “If this technology goes wrong, it can go quite wrong.”

One of the biggest threats is generative AI’s possible misuse for disinformation. AI-fabricated content could endanger democratic processes, while autocratic regimes could use the technology to manipulate election results. This has serious implications for democracies like India’s, which has already been facing assaults by governments whose commitment to democracy has been suspect. There is a raging controversy in India about the alleged use of technology for purposes other than those originally envisaged. So, with a more dangerous tool within easy reach, the potential for trouble is beyond comprehension.

Generative AI, capable of creating text, images or videos based on a user’s prompts, has demonstrated how dramatically it can reshape industries, businesses, markets and economies. So far the most menacing aspect of AI has been its potential to replace humans. That all repetitive and mechanical tasks would be among the first casualties has been taken as a given. In this respect, AI could be more disruptive than anything we have already seen, the internet and the World Wide Web included. Tech giant IBM, which has been pioneering machine learning and similar capabilities, announced this week that it was either cancelling or slowing down new recruitment as it believes a lot of these jobs would be taken care of by artificial intelligence. Goldman Sachs has estimated that two-thirds of all US jobs are exposed to some degree of automation by AI.

The increasing use of ChatGPT and similar applications has left some technology companies facing the threat of extinction. US education tech company Chegg, a firm that has been eyed by home-grown edutech major Byju’s, has seen its shares nosedive as freely available new chat bots can deliver most of what the company has been charging hefty fees for all this while. With its business model threatened beyond redemption, Byju’s itself seems to be in trouble, its woes compounded by alleged regulatory violations as well as practices that apparently contravene the human rights of its student customers and their parents. The company has been accused of promoting self-doubt among students so that they are forced to subscribe to costly packages.

Executives of leading companies have been handing over some of their tasks to the chat bots, which arguably do a better job of working out strategies, making presentations and creating summaries, though they sometimes go overboard, as the tools source information from all over, irrespective of accuracy and reliability. Tech giant Samsung recently banned its employees from using ChatGPT and similar apps at work.

Nithin Kamath, founder of disruptive stockbroking firm Zerodha, said this week that it took just about half an hour to integrate commoditised ChatGPT, see tangible benefits, and realise that more than 20 per cent of jobs could be automated.

“We’ve just created an internal AI policy at Zerodha to give clarity to the team, given the AI/job loss anxiety. This is our stance: We will not fire anyone on the team just because we have implemented a new piece of technology that makes an earlier job redundant.”

Elaborating on what AI could mean for capitalism and humanity, he said it is unlikely that humans will be able to compete with intelligent machines in many walks of life. “I have never done digital art, but it took me a few seconds to create this image of a CEO being replaced by an intelligent machine in the style of Da Vinci,” Kamath said. (IPA Service)

