Indira Gandhi inaugurated parliament annexe in ’75

Amid all the noise over who should or should not inaugurate the new...

in Politics May 25

Karnataka MLAs take oath in the name of their leaders

At the first special legislative session after the formation of the Congress government...

in Politics May 25

New Karnataka govt to review bad Bommai ministry orders

Karnataka Minister Priyank Kharge on Wednesday said the orders and legislations enforced under...

in Politics May 25

Opinion

An appeal

The legacy of IPA, founded by Nikhil Chakravartty, the doyen of journalism in India, to keep the flag of independent media flying high, is facing the threat of extinction due to the effect of the Covid pandemic. Only emergency funding can avert such an eventuality. We appeal to all those who believe in the freedom of expression to contribute to this noble cause.

Latest Central Ordinance On Delhi Govt Powers Is An Assault On Federalism

By Prakash Karat In a brazen authoritarian move, the Modi government has promulgated an ordinance to nullify the Supreme Court’s landmark decision on the Delhi government’s control over administrative officers under its jurisdiction. The ordinance gives the control over postings and transfer of officers back to the Lt. Governor...

Thailand’s New Winning Coalition’s Programme Will Have Impact On Myanmar Politics

By Arun Kumar Shrivastav One of the key questions arising from the recent opposition victory in Thailand’s general election on May 14 is the potential impact on the country’s policy towards Myanmar. The progressive Move Forward Party (MFP), which secured the most seats in the House of Representatives, is...

New Parliament Building Inauguration By Prime Minister On Savarkar’s Birthday Has Significance

By Krishna Jha On May 28, 2023, the new building for our Parliament will be inaugurated. It is the icon of our sovereign democratic Republic, a status achieved after a long, arduous struggle for independence from British colonialism. Those who took part in the struggle for our independence...

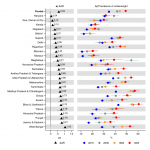

New Estimate Shows Stunting And Wasting Very High In India

By Dr. Gyan Pathak New estimates show that stunting and wasting among children under 5 years in India are very high, at 31.7 per cent and 18.7 per cent respectively, against global averages of 22.3 and 6.8 per cent. In absolute terms, India had 36,138,100 stunted...

Why Is Prime Minister Narendra Modi Adamant On Inaugurating New Parliament Building?

By Sushil Kutty Twenty opposition parties will boycott the new Parliament building unveiling. They don’t want the honourable Prime Minister to act as the ‘Inauguration Minister’, which has been a boringly familiar sight ever since 2014. Prime Minister Narendra Modiji’s appetite for cutting ribbons and laying foundation stones is inelegantly voracious. It...

Kerala LDF Govt Makes Big Developmental Strides

By P. Sreekumaran THIRUVANANTHAPURAM: The Left Democratic Front (LDF) Government headed by Chief Minister Pinarayi Vijayan, which has just completed two years of its tenure, has taken big strides on the development front. The most significant achievement has been the success in erasing the perception of the LDF Government...

52 Years After Army Massacre Of Bengalees, Pakistan Yet To Apologise

By Ashis Biswas Long-nurtured international prejudices die hard, if at all. However, the unrelenting Bangladeshi diplomatic initiative of recent years, seeking global condemnation of the Pak-sponsored mass slaughter of the innocent Bengali civilian population during the 1971 freedom struggle, is beginning to pay off at last. The liberation...

Cape Cobra Under Pilot’s Seat Is Not Uncommon

By Girish Linganna Only about a month ago, on Monday, April 4 this year, a South African pilot named Rudolf Erasmus earned applause from aviation experts for safely making an emergency landing after an extremely venomous Cape Cobra reared its head in the cockpit mid-flight, news agency PTI had...

The President, Not The Prime Minister, Is Best Suited To Inaugurate New Parliament Building

By S.N. Sahu Nineteen Opposition political parties – the Indian National Congress, the All India Trinamool Congress, the Dravida Munnetra Kazhagam, the Aam Aadmi Party, the Janata Dal (United), the Nationalist Congress Party, the Shiv Sena (Uddhav Balasaheb Thackeray), the Communist Party of India (Marxist), the Samajwadi Party, the...

Worker Empowerment Stalls In Venezuela As Left Coalition Unity Breaks Down

By W. T. Whitney Jr. Hugo Chavez, Venezuela’s president from 1999 until 2013, inspired and led a “Bolivarian Revolution” that sought independence from U.S. domination, regional integration, and so-called “socialism of the 21st century.” The obstacles that lay before these goals have been many: capitalism in control of the...